Modern society functions on information.

It underpins much of what happens every day, from markets, where price discovery depends on the information participants use, to decisions about which treatment option to choose.

By default, information is not free of values or bias. It carries the views and opinions of the people who give it to us.

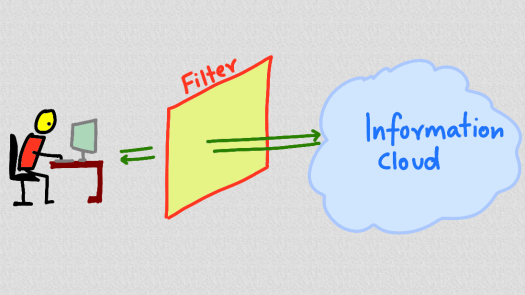

These days we are particularly susceptible to the biases built into the systems of the large tech companies that dominate the flow of information we receive.

We look for information using a search engine. Social media filters and serves up what it thinks we will like. Recommendation engines on shopping sites suggest what we should read next.

This creates problems.

Safiya Umoja Noble writes about how racism and sexism are built into search engines in her book, *Algorithms of Oppression*.

We see a filtered set of results drawn from what is out there, and nothing signals that what we see may be biased or unrepresentative of the wider body of information.

Noble’s analysis shows that the results are in fact biased, and that the nature of the internet business, and of the companies that dominate it, shows up in results that discriminate between people on the basis of race.

To some extent, search engines hold up a mirror to society: the algorithms are learning from what we do online.

We could see the results not as a brave attempt to classify and bring us the best of what is out there, but as a reflection of how human society is right now.

And what that tells us is that we still have a long way to go in achieving fairness and equality across global society.

Some people believe that the problem can be solved with more data and better learning algorithms.

That may well be the case, but right now we have problems with information and content on the internet that range from graphic displays of terrorism to online bullying.

The tech companies have begun to respond by adding more people to the process to help filter and curate appropriate content.

The sheer volume of content generated every day, however, means that bad stuff will inevitably get through.

To protect ourselves, we need more than filters; we need frameworks to organise and question the information that gets through.

We need to learn to think for ourselves.