Thinking about the future is not easy.

As humans we fall prey to biases, and two in particular are important.

The first is hindsight bias where, looking back, we think that things that have happened were far more inevitable than they actually were.

For example, a Trump victory now seems pre-ordained – Hillary never stood a chance against the Twitter machine.

At the time, however, not many around the world seriously thought Trump would win.

The second is foresight bias – we believe some outcomes are more likely than they really are and so bet on them too heavily.

We need tools and methods to guard against these biases and reason about the future more effectively – and the military and intelligence establishments are a good source of information on these.

For example, this guide sets out a detailed approach to counterfactual reasoning, one of the tools every analyst should be able to use.

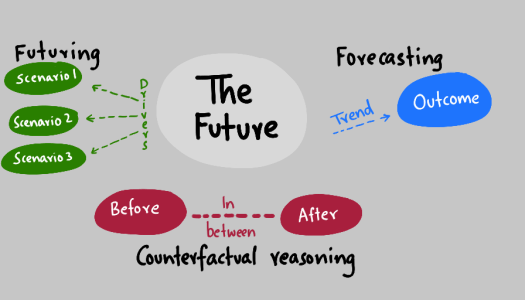

When we think about the future we often do one of two things.

1. We look at trends

We see trends and infer outcomes that result from those trends – a technique called forecasting.

For example, we might see a trend towards decentralised currencies such as bitcoin, or a trend towards wide-scale adoption of solar photovoltaics and distributed generation.

We forecast an outcome based on these trends – the end of traditional banking or energy firms.

2. We create possible futures

We do futuring when we look at drivers and come up with possible scenarios that might result.

For example, the widespread use of mobile phones will make desktop or offline services less relevant for things like getting media, checking mail and reading the news.

Counterfactual reasoning

Counterfactual means counter to the facts, and we reason that way by asking questions like “What if” or “If we”.

We can look at a problem in terms of antecedents and consequents – what comes before and after a fact.

Approaching a problem in this way has two benefits – it helps us explore cause and effect and it lets us be more creative.

For example, take a statement like “the fall in the price of solar panels means that we will have widespread adoption in residential neighbourhoods”.

That seems like a perfectly reasonable statement – but what happens if we break it down?

Should we start a solar panel sales business right now?

The before bit is a fall in the price of solar panels – which we see happening right now.

Cheaper solar panels clearly lead to lower equipment costs.

But does that alone justify the conclusion about what comes after – widespread adoption in residential neighbourhoods?

It does not – because we haven’t looked at the components in detail.

First, we need to examine why prices are low. Is it because the technology is getting better and cheaper, or is it because massive capacity increases in China are resulting in panels being dumped on the world market?

Then we need to think about the in-between – what may happen if what we predict takes place.

Low panel prices don’t get around other problems – such as connection constraints in neighbourhoods, other installation costs such as scaffolding, and the possibility that high demand for installations, coupled with a shortage of qualified tradespeople after Brexit, may push up overall costs.

Then there is the after – new homes are very likely to have panels fitted – they can be designed in.

But will there be a rush by homeowners to retrofit panels, or will they be put off by the upfront cost and the possible impact on sale prices?

If existing homes are slow to change, the overall rate of change will be slow. Existing housing stock stays in place for decades, so replacing everything with new energy-efficient housing could take a century.
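To see where a figure like a century can come from, here is a rough back-of-the-envelope sketch in Python. It assumes, purely for illustration, that a fixed fraction of the original housing stock is replaced each year. The function name and the rates are hypothetical, not real housing data.

```python
import math


def years_to_replace(share_to_replace: float, annual_replacement_rate: float) -> int:
    """Years needed to replace a share of the housing stock.

    Assumes (hypothetically) that a constant fraction of the ORIGINAL
    stock is replaced every year, so replacement is linear over time.
    """
    if not 0 <= share_to_replace <= 1:
        raise ValueError("share_to_replace must be between 0 and 1")
    if not 0 < annual_replacement_rate <= 1:
        raise ValueError("annual_replacement_rate must be between 0 (exclusive) and 1")
    # Round first to avoid floating-point noise tipping ceil() over by one year.
    return math.ceil(round(share_to_replace / annual_replacement_rate, 9))


# Illustrative rates only: 1% and 2% of the stock replaced per year.
for rate in (0.01, 0.02):
    print(f"At {rate:.0%} a year, full replacement takes {years_to_replace(1.0, rate)} years")
```

Under these assumptions, a 1% annual replacement rate means a hundred years to turn over the whole stock, and even doubling the rate still leaves a wait of half a century. The point is not the exact number but that the "after" depends heavily on a rate we rarely stop to examine.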

Summary

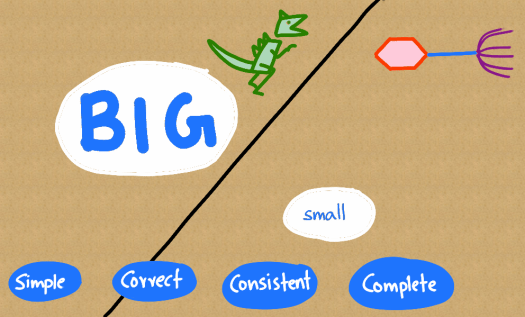

We can jump very quickly from what we see now to what we think will happen in the future.

The purpose of using analytic methods in a structured way is to help slow us down and examine the situation in more detail, coming to a more considered view on what may happen.

The conclusions we come to as a result may help us make better decisions.