After three years, I have finally started paying for AI. Here’s why.

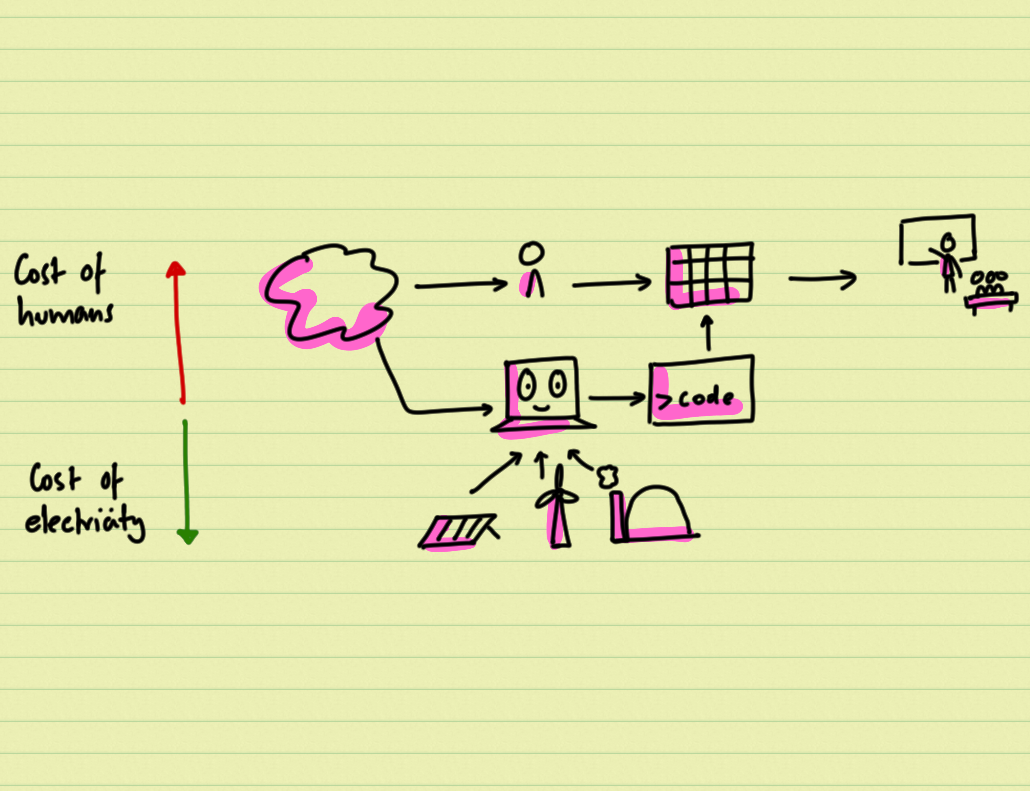

I’m taking a new business to market with co-founders, focused on solving the data management problem in sustainability.

With our previous businesses we quickly reached a point where we needed more hands to do the work – there were more tasks to do than we had time for.

But AI can now handle these tasks more quickly and competently in an enterprise context.

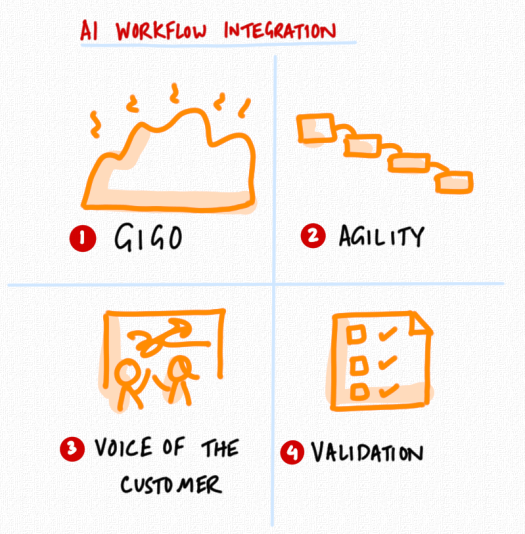

Still, four principles for deployment are worth remembering.

- GIGO (garbage in, garbage out) is still a thing

AI can do a lot, but it cannot unscramble your omelette. Give it good data, and it produces good output.

Give it garbage and it produces more work for you to fix.

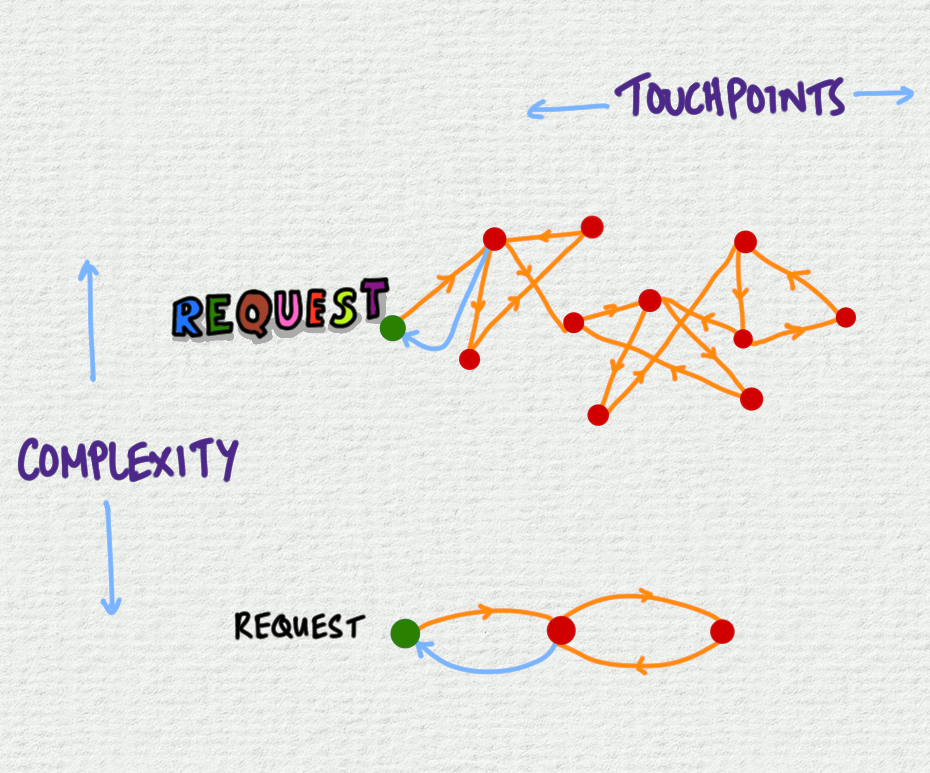

- Stay small to stay agile

It’s increasingly clear that you have to scope a project carefully to get what you want. The bigger the scope, the less flexible the project becomes.

Keep your projects small and contained. Then you’ll get the benefits of AI while staying agile.

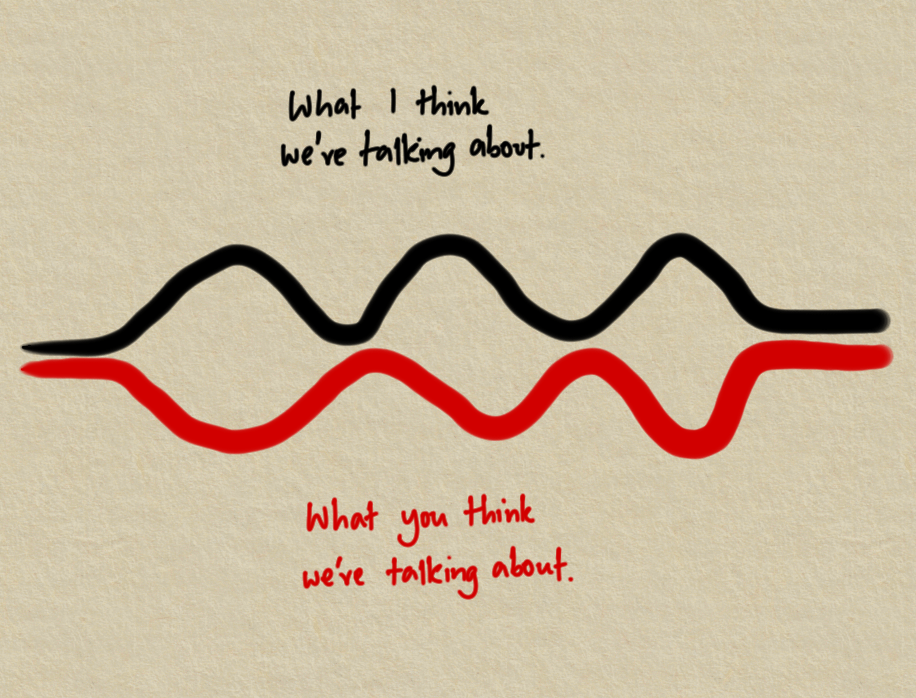

- Build what the customer needs

The time and costs of making stuff have gone down – unless you burn hours and tokens building things no one wants.

I’m spending 3-4 hours talking to customers for every hour of building – making sure I’m working on the right thing.

- Build quality into the process

AI works so quickly that there’s no time to inspect everything.

Create outputs that are easy to validate. Build in checks. Make it easy to see what’s going on.

A good validation process is the way to build trust.
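To make "build in checks" concrete, here is a minimal sketch of what an automated check on AI-generated output could look like. It is an illustration only: the field names (`site`, `year`, `emissions_tonnes`) and the `validate_records` helper are hypothetical, not taken from any real tool or dataset. The idea is that every record is tested against a few simple rules, and anything that fails is listed in plain language for a person to review.

```python
from dataclasses import dataclass

# Hypothetical schema for illustration: these field names are assumptions,
# not taken from any real dataset or tool.
REQUIRED_FIELDS = ("site", "year", "emissions_tonnes")


@dataclass
class Issue:
    row: int       # index of the record in the input list
    message: str   # human-readable description of the problem


def validate_records(records):
    """Return a list of Issues found in the records (empty if all pass)."""
    issues = []
    for i, rec in enumerate(records):
        # Rule 1: every required field must be present and not None.
        missing = [f for f in REQUIRED_FIELDS if rec.get(f) is None]
        if missing:
            issues.append(Issue(i, "missing fields: " + ", ".join(missing)))
            continue
        # Rule 2: the emissions figure must be a non-negative number.
        value = rec["emissions_tonnes"]
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            issues.append(Issue(i, "emissions_tonnes is not a number"))
        elif value < 0:
            issues.append(Issue(i, "emissions_tonnes is negative"))
    return issues


if __name__ == "__main__":
    sample = [
        {"site": "Plant A", "year": 2023, "emissions_tonnes": 120.5},
        {"site": "Plant B", "year": 2023, "emissions_tonnes": -4},
        {"site": "Plant C", "year": 2023},
    ]
    for issue in validate_records(sample):
        print(f"row {issue.row}: {issue.message}")
```

The checks are deliberately simple. A short list of flagged rows is quicker to review than reading every output line by line, and that is what makes it easy to see what’s going on.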

As a new(ish) firm, we have the flexibility to start from scratch and build a consultancy that leverages AI.

But it’s not about cutting costs.

It’s about building a better way to do good work for clients.