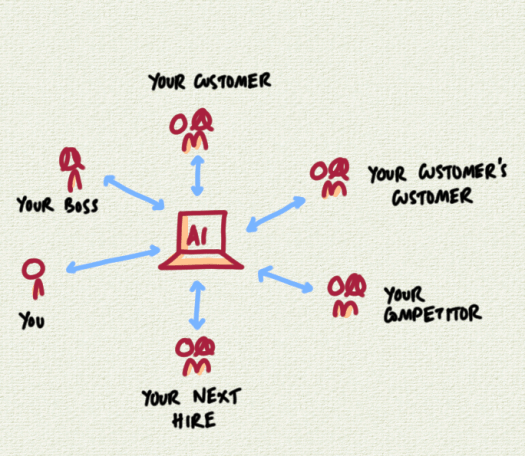

We’re using AI more and more, as it takes centre stage in work and business.

Let’s go through the list.

I’m sure you’re using AI to help you read, write, analyse and summarise information.

So is your boss.

Your customer is using AI before they talk to you.

As is your customer’s customer.

All your competitors are leaning into this.

And your next hire is building a resume with AI and practising interviews with AI avatars.

We’re doing this to be more productive. But it also creates a whole new category of risks.

The recent Anthropic row is a preview. Anthropic has fallen out with the DOD, federal agencies have been ordered to stop using it, and it’s been labelled a “supply chain risk”.

Any use of tools like Claude could bar a company from defense contracts.

Will all this happen or will there be a negotiated resolution?

We don’t know yet. But even the possibility that an AI tool central to how so many organisations work could be banned with the stroke of a pen is going to cause concern.

If you’ve spent the last year building your services around Claude, what are you going to do next? Wait and see? Pivot?

When AI becomes core infrastructure – and is exposed to regulatory and geopolitical risk – we need new controls and mitigations.

What are they going to look like?