I came across a term recently that should guide how we use AI.

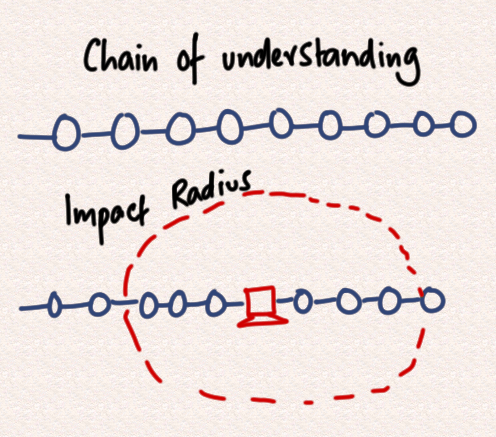

The “chain of understanding”.

If you watch cop shows, you’ll have heard of the chain of custody – the process that ensures evidence can’t be tampered with.

We need something similar for AI.

AI can generate huge amounts of content – but our ability to absorb and verify it hasn’t changed.

So do we really read and understand what it produces? Or do we just trust that it’s right?

Yesterday, I asked two different AIs the same question. They gave two confident but contradictory answers.

That’s the risk.

In many contexts, choosing the wrong answer has a wide impact radius: it can affect millions in investment and ripple through supply chains.

The issue isn’t speed.

It’s whether you understand the logic underpinning a decision or how a program actually works.

That’s the chain of understanding.

AI can generate answers. It can’t take responsibility for them.

If you can’t explain it, you probably shouldn’t act on it.