Are the rules of building sustainable competitive advantage changing?

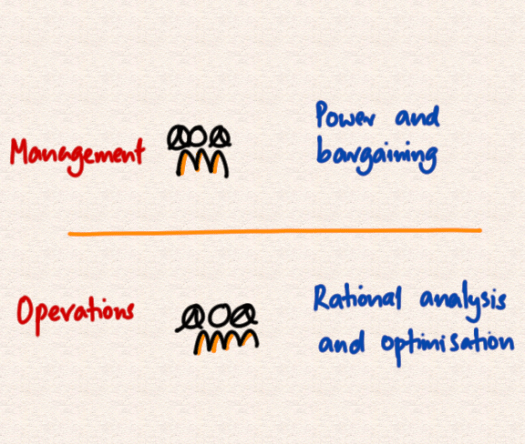

I’ve used the VRIO checklist for a decade now to check if I am on track.

- Value – Does the activity produce value for a client?

- Rare – Is it a hard-to-find capability?

- Inimitability – Is it hard to copy?

- Organisation – Do you have the operational system to deliver?

I use the checklist to help me identify, design and implement new service lines that will have a sustainable competitive advantage.

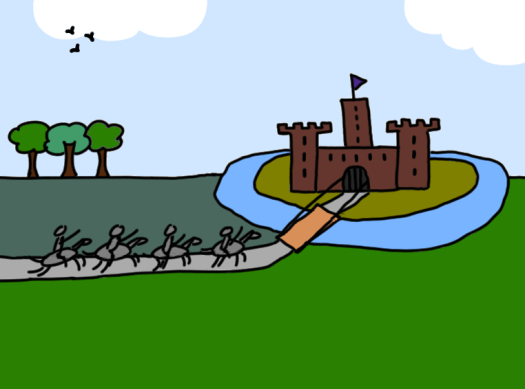

That matters because a sustainable competitive advantage, better known as a moat, is what sustains profitability.

If you have a moat, you can have a margin. Without it, your selling price drops to the cost of production.

You’ll see comments like “the cost of intelligence will drop to the cost of electricity”. That’s what happens to a selling price in a world of perfect competition.

Based on that one economics class I took a while ago…

The most important item on the checklist, for me anyway, is inimitability.

If what you do is hard to copy, then it’s difficult to replace, and your customers stay with you.

But is AI changing that? Surely you can make a copy of any business in an instant with AI?

The rapid introduction of AI tools that seemingly do everything can make it feel like the barbarians are at the gate – that everything is going to change and everyone is going to be out of a job.

Perhaps we should pause for a minute.

There is a story, possibly apocryphal, about the war in the South Pacific.

Soldiers came in and built airfields, planes arrived with goods, and a thriving economy sprang up.

The war ended, and the soldiers left.

The islanders wanted the economy to go on, so they kept the runways paved, built new buildings out of bamboo and preserved the look of the airfields.

But no planes landed.

The point is that copying the look of something does not copy what makes it work. If a service is simple and surface-level, then it is under threat from AI, automation and replication.

If it’s layered and intertwined, with a lot of tacit knowledge involved, then it’s harder to replace.

If you want this wrapped up in a single line, it is still this: make what you do to create value hard to copy.