Why is the energy business so heavily controlled and regulated?

Mostly because of its history.

When you have a few large generators and millions of consumers, it's big business. That leads to operators trying to control markets, which triggers political oversight, which inevitably leads to questions of control.

So what we have across the world is a system of generation, transmission and distribution over a grid system that connects where energy is made and where it is used, and a parallel system of metering and accounting to bill users.

Microgrids and peer-to-peer systems want to change that

Imagine a new housing development where the developers have decided to create a private network of electricity wires that connect the homes instead of using the cables and equipment provided by the grid.

There may be a few connections to the main grid, but the rest of the properties are effectively off-grid.

At the same time, each house has solar panels for electricity and hot water, excellent insulation, low running requirements and perhaps a micro-CHP (combined heat and power) unit and battery storage.

The independent network forms a microgrid.

The existence of housing units with the ability to generate electricity and heat from a variety of sources and a population that uses energy creates a network of peers – equal participants.

The concept of a peer is sometimes forgotten: the households of the future will be both producers and users of energy, so-called prosumers.

What they need to work are markets

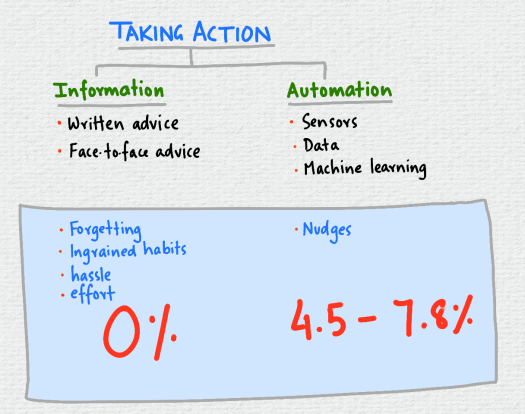

In a microgrid peer-to-peer system, there will need to be some way of keeping everybody happy – and that is done by a price system and a market.

If people are free to set prices (or the trading is automated and the machines trade among themselves) then the market will result in a price that matches supply and demand.

Trading locally avoids the cost of routing energy through the grid, so it should be cheaper.
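
As a rough illustration of how a price can emerge that matches supply and demand, here is a minimal sketch of a double auction in Python. Everything in it is an assumption made for illustration: the `Order` type, the `clear_market` function, the household labels and the midpoint pricing rule are hypothetical, and real pilots may clear their markets quite differently.

```python
from dataclasses import dataclass


@dataclass
class Order:
    household: str  # hypothetical participant label
    price: float    # limit price in pence per kWh
    kwh: float      # energy wanted (bid) or offered (ask)


def clear_market(bids, asks):
    """Match the highest bids with the cheapest asks.

    Returns (clearing_price, traded_kwh). The clearing price is the
    midpoint of the last bid/ask pair that could still trade, which is
    one simple convention among several (an assumption of this sketch).
    """
    bids = sorted(bids, key=lambda o: o.price, reverse=True)
    asks = sorted(asks, key=lambda o: o.price)
    i = j = 0
    bid_left = bids[0].kwh if bids else 0.0
    ask_left = asks[0].kwh if asks else 0.0
    volume = 0.0
    price = None
    # Keep trading while the best remaining buyer will pay at least
    # what the cheapest remaining seller is asking.
    while i < len(bids) and j < len(asks) and bids[i].price >= asks[j].price:
        traded = min(bid_left, ask_left)
        volume += traded
        price = (bids[i].price + asks[j].price) / 2
        bid_left -= traded
        ask_left -= traded
        if bid_left == 0:  # this buyer is fully served, move to the next
            i += 1
            if i < len(bids):
                bid_left = bids[i].kwh
        if ask_left == 0:  # this seller is sold out, move to the next
            j += 1
            if j < len(asks):
                ask_left = asks[j].kwh
    return price, volume


if __name__ == "__main__":
    # Made-up orders from six hypothetical households.
    bids = [Order("A", 16, 3), Order("B", 12, 2), Order("C", 8, 4)]
    asks = [Order("D", 6, 2), Order("E", 10, 2), Order("F", 14, 5)]
    price, volume = clear_market(bids, asks)
    print(f"clearing price {price} p/kWh, volume {volume} kWh")
```

With these made-up orders, 4 kWh changes hands at 11 p/kWh. Household C bids too low to buy and household F asks too much to sell, so both would fall back on whatever the main grid offers.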

Experiments like the Brooklyn microgrid set up by LO3 Energy are showing how this could be done.

A peer-to-peer network does not have to be part of a microgrid

We could have renewable generators, like a solar farm, connected to the grid that want to directly sell all their output to a user connected somewhere else on the grid.

They can currently enter into a bilateral contract that is settled and billed by a supplier.

A true peer-to-peer system could eliminate the need for a supplier, and simply have a separate contract – based for example on a contract for differences model – although these are still complex to create and agree on a one-to-one basis.
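
To make the contract for differences idea concrete, here is a minimal sketch of a two-way settlement in Python. It assumes the simplest form of such a contract: the generator and the user agree a strike price, and each period they settle the gap between that strike price and a market reference price. The function names, prices and volumes are hypothetical and are not taken from any real contract.

```python
def cfd_payment(strike, reference, kwh):
    """Payment from user to generator for one period, in pence.

    A positive result means the user tops the generator up to the
    strike price; a negative result means the generator pays the
    difference back to the user.
    """
    return (strike - reference) * kwh


def settle(strike, periods):
    """Net payment over several (reference_price, kwh) periods."""
    return sum(cfd_payment(strike, ref, kwh) for ref, kwh in periods)


if __name__ == "__main__":
    strike = 10.0  # agreed price in p/kWh (made-up figure)
    # Reference price below the strike: the user pays the generator.
    print(cfd_payment(strike, 8.0, 100.0))
    # Reference price above the strike: the generator pays the user.
    print(cfd_payment(strike, 13.0, 100.0))
    # Net position across both periods.
    print(settle(strike, [(8.0, 100.0), (13.0, 100.0)]))
```

The arithmetic is trivial. The complexity mentioned above lies in agreeing the strike price, the reference price and the metered volumes with each counterparty.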

A start in this direction is Open Utility’s Piclo platform that matches users with local generators.

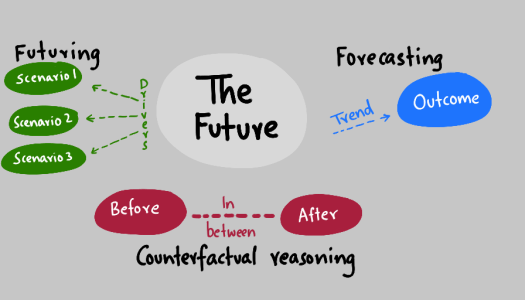

We are still in the early stages of a transition

We’re a long way from having solar PV on every roof, and local networks of users have yet to spring up.

Will there be a revolutionary peer-to-peer change, or is it likely that the majority of the system will still be controlled by a few producers?

If history is anything to go by, network effects and scale matter.

We may have lots of committed, small players, but Google-style companies for energy will still probably emerge: a few highly connected hub players that aggregate and influence how everything else works.

We still operate in a winner-takes-all ecosystem, and peer-to-peer is a small part of it.

Will it be different this time?